The verified Olostep app on Zapier gives you five actions inside Zapier’s visual builder: scrape a URL, get AI answers, batch-scrape thousands of URLs, crawl a site, or map all its links. You can then connect the results to any of 8,000+ apps.

Before you start

- An Olostep account with an API key: get one free, no credit card required. Your first 500 credits are included.

- A Zapier account: any plan works. Free plans can run Zaps manually; paid plans support scheduled and multi-step Zaps.

- No coding required: everything in this guide is done through Zapier’s visual editor.

Setup

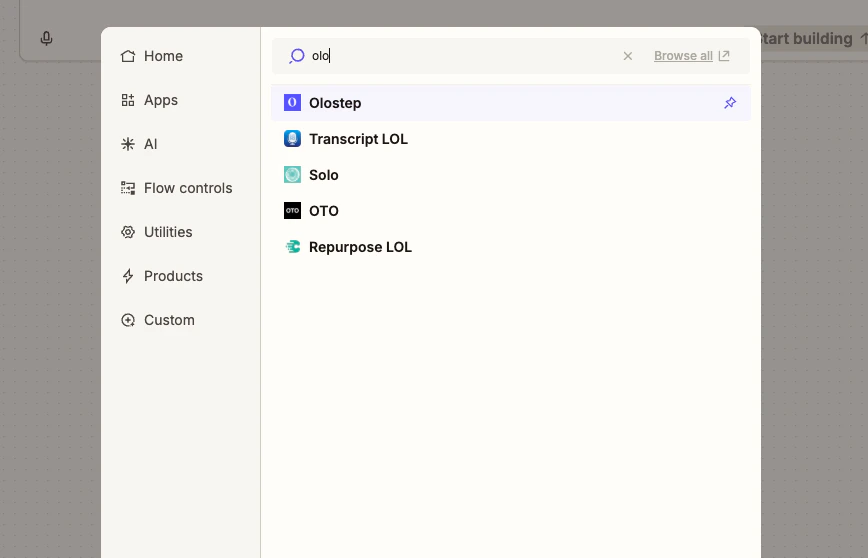

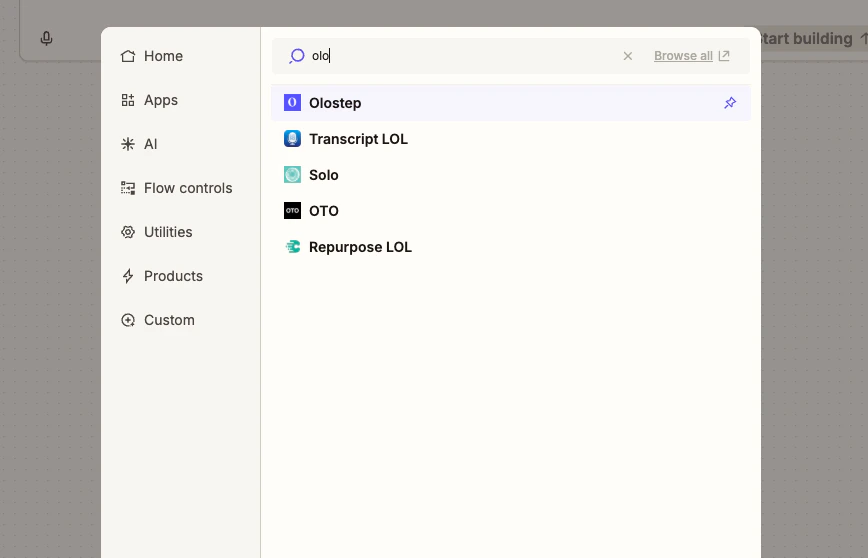

Find Olostep in Zapier

Open any Zap, click + to add an action, and search for Olostep. Select Olostep from the results.

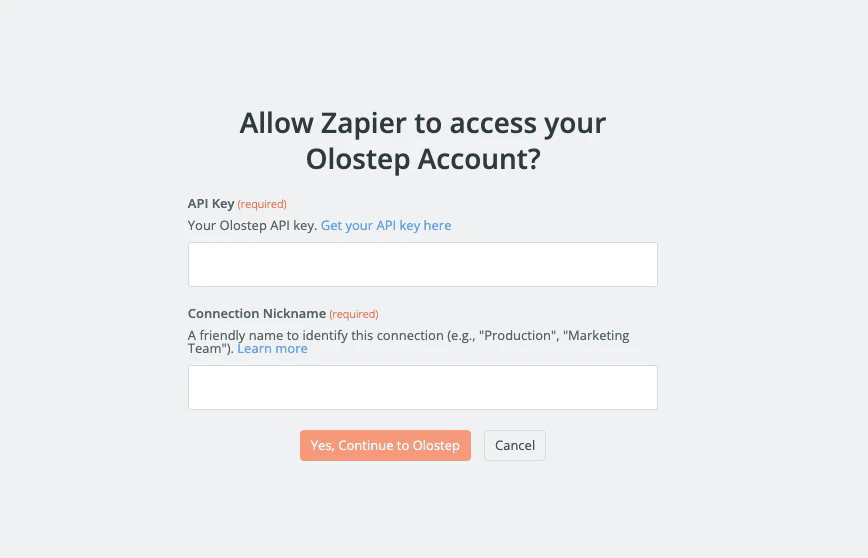

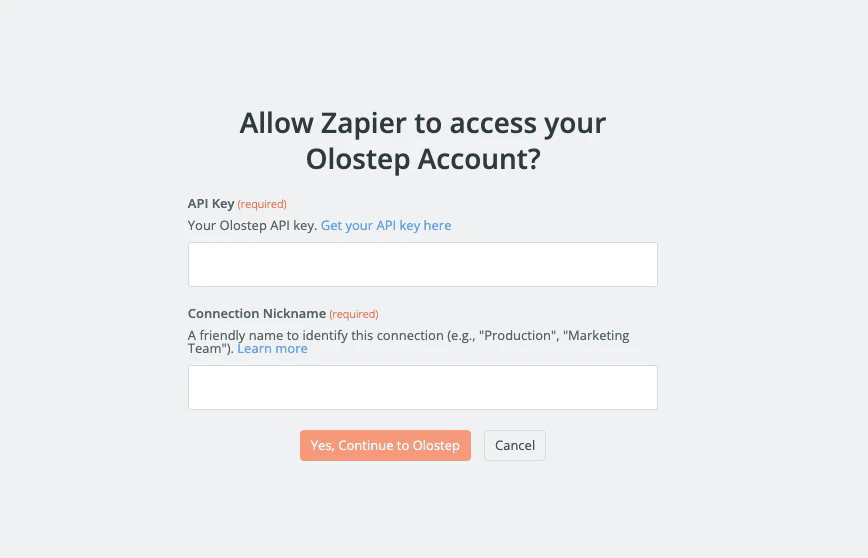

Connect your account

Click Sign in to Olostep. Paste your API key into the field and click Yes, Continue to Olostep. Zapier will verify the key and save the credential for all future Zaps.

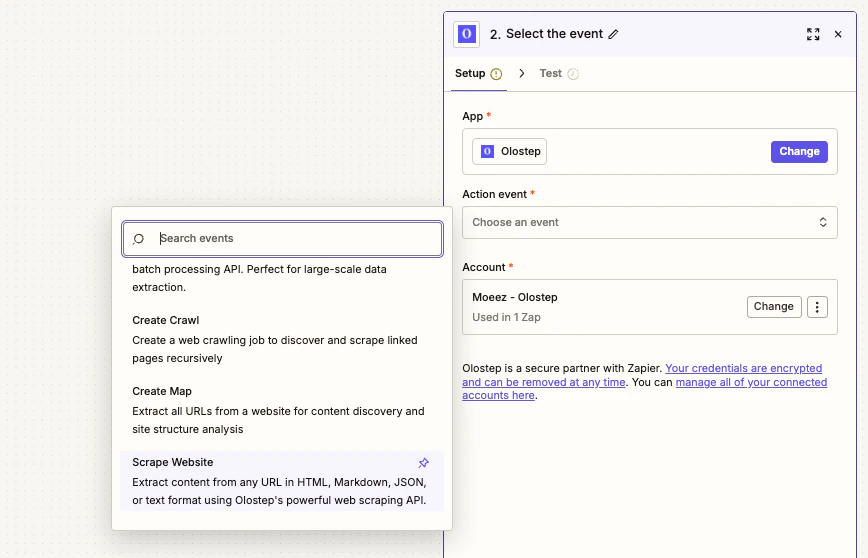

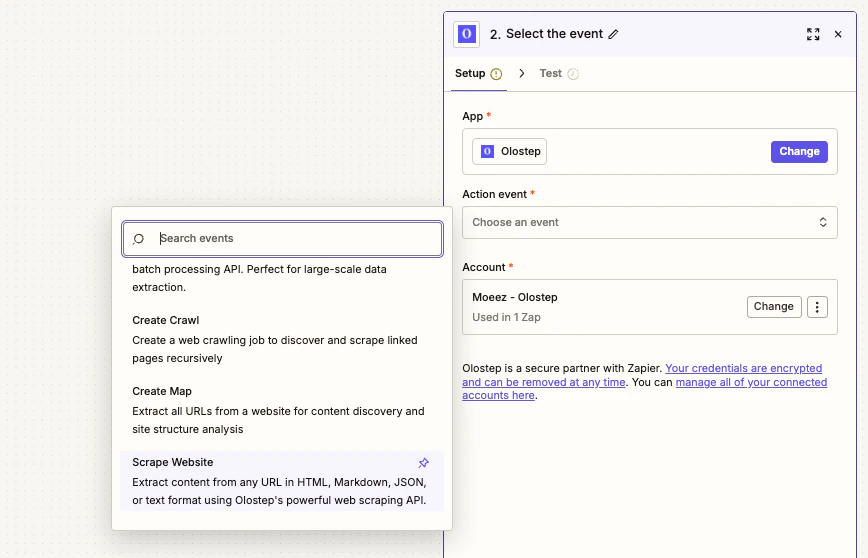

Choose an action

With your account connected, pick one of the five Olostep actions from the Action dropdown and fill in the required fields.

Configure Scrape Website (URL + format)

- URL to Scrape: map it from your trigger or enter manually

- Output Format: choose Markdown, HTML, JSON, or Text

Actions

Scrape Website

Pull content from any URL as Markdown, HTML, JSON, or plain text. Handles JS-rendered pages with optional wait times and country targeting.

Ask AI Answer

Ask a natural-language question and get a cited answer grounded in pages you provide or a live web search.

Batch Scrape URLs

Submit up to 100,000 URLs in one job, processed in parallel. Returns a batch_id; retrieve results asynchronously.

Create Crawl

Start from a URL, follow links, and scrape all subpages. Good for docs sites, blogs, or full-site ingestion. Returns a crawl_id.

Create Map

Get every URL on a site without scraping content. Use it for discovery before a batch job. Returns a map_id.

Batch, Crawl, and Map are async. Store the returned ID and use a Delay step or a second Zap to retrieve results once processing completes.
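The "store the ID, check back later" pattern for async jobs can be sketched in Python as a bounded polling loop. This is an illustration only, not Olostep's or Zapier's implementation: `fetch_status` is a hypothetical callable you would supply (for example, a wrapper around whatever status lookup your retrieval step uses), and the `"completed"` status string is an assumption.

```python
import time
from typing import Callable


def poll_until_done(
    fetch_status: Callable[[str], str],
    job_id: str,
    interval_s: float = 30.0,
    max_attempts: int = 20,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Check a stored job ID until it reports completion or attempts run out.

    fetch_status is a hypothetical function that takes the stored ID
    (batch_id, crawl_id, or map_id) and returns a status string.
    The "completed" value is an assumption made for this sketch.
    """
    for attempt in range(max_attempts):
        if fetch_status(job_id) == "completed":
            return True
        # Wait between checks, but not after the final attempt.
        if attempt < max_attempts - 1:
            sleep(interval_s)
    return False
```

Capping the number of attempts keeps the check from running indefinitely if a job stalls. Inside Zapier itself, the Delay step or a scheduled second Zap plays the role of the `sleep` call.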

Example workflow: Daily competitor briefing

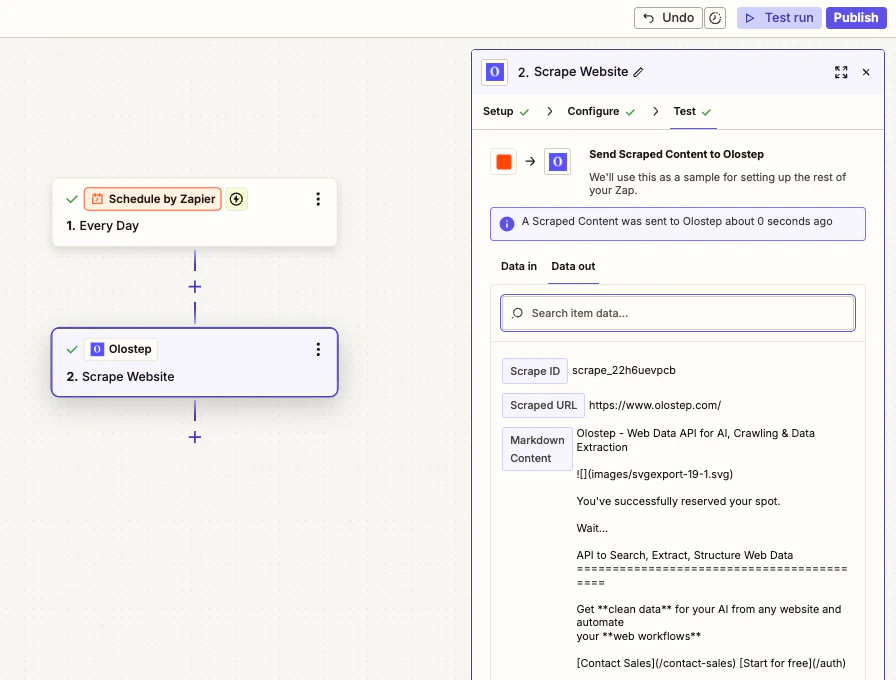

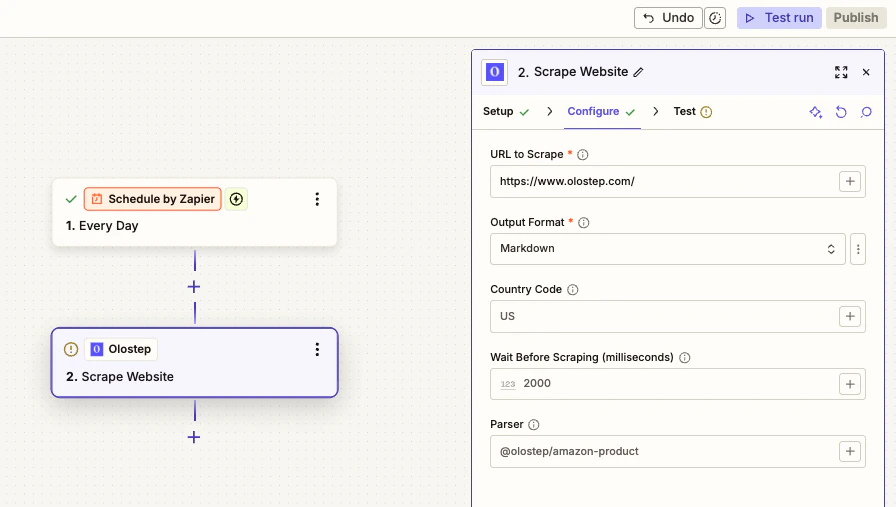

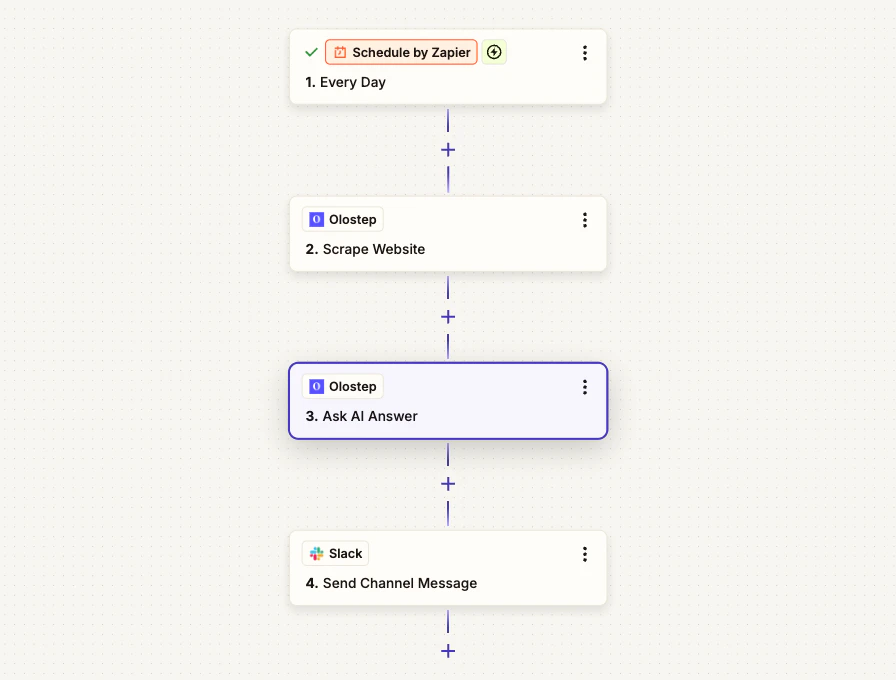

What it does: Every morning, this Zap scrapes a competitor page, asks Olostep AI Answer to summarize key updates from that page, and posts the final briefing with citations to Slack.

Nodes used: Schedule by Zapier -> Olostep Scrape Website -> Olostep Ask AI Answer -> Slack

Step 1: Add a Schedule trigger

In Zapier, create a new Zap and set the trigger to Schedule by Zapier. Set it to run Every Day at a time that works for your team (e.g. 8 AM).

Step 2: Add Olostep Scrape Website

Add an Olostep action and choose Scrape Website. Set:

- URL: https://competitor.com/blog

- Output Format: Markdown

Step 3: Add Olostep Ask AI Answer

Add a second Olostep action and choose Ask AI Answer. Set:

- Question: What are the key updates, announcements, product changes, or pricing changes on this page today? Return a concise briefing with bullet points and include citations.

- Context URLs (JSON Array): map the Scraped URL from step 2

- Format: Markdown

- Include Citations: true

Step 4: Send to Slack

Add a Slack action set to Send Channel Message. Map:

- Channel: #competitive-intel

- Message Text: Daily Competitor Briefing

- Message Body: {{Answer (Markdown)}}

What you get

Every morning your Slack channel receives a message like:

Daily Competitor Briefing

- Launched a new integration for ecommerce workflows

- Updated pricing page with a new mid-tier plan

- Published two new blog posts about automation best practices

Sources: competitor.com/blog, competitor.com/pricing

From here you can extend this Zap: add a Filter to only post if specific keywords appear, write briefings to Google Sheets for tracking, or run separate Zaps for different competitor URLs.
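Zapier's built-in Filter step covers the keyword idea without code. If you would rather do the check in a Code by Zapier step, the logic might look like the following sketch. The function name and keyword list are hypothetical, and matching is whole-word and case-insensitive by assumption.

```python
import re
from typing import Sequence


def mentions_keywords(briefing: str, keywords: Sequence[str]) -> bool:
    """Return True if any keyword appears in the briefing as a whole word or phrase.

    Matching ignores case. Keywords are assumed to start and end with
    letters or digits, so that the word-boundary anchors behave as expected.
    """
    for keyword in keywords:
        pattern = r"\b" + re.escape(keyword) + r"\b"
        if re.search(pattern, briefing, flags=re.IGNORECASE):
            return True
    return False
```

For example, `mentions_keywords(briefing_text, ["pricing", "integration"])` could gate the Slack step so that only briefings touching those topics are posted.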

Parsers

Add a parser ID to the Parser field on any Scrape or Batch action to get structured data instead of raw content:

| Parser | Extracts |
|---|---|
| @olostep/amazon-product | Title, price, rating, reviews, images, variants |
| @olostep/google-search | Result titles, URLs, snippets |
| @olostep/google-maps | Business name, address, rating, reviews |
| @olostep/extract-emails | Email addresses from any page |
| @olostep/extract-socials | Social profile links (X, GitHub, LinkedIn, etc.) |
| @olostep/extract-calendars | Google Calendar and ICS links |

Zapier Limitations & Workarounds

Task limits

Zapier counts each action as one task against your plan’s limit. Workaround: Use Batch Scrape URLs to process multiple URLs as a single task instead of looping a single-scrape action.

Execution timeout

Zaps time out after 30 seconds. Crawls and large batch jobs take longer than that. Workaround: Store the returned crawl_id or batch_id and retrieve results in a separate Zap triggered by a webhook or a scheduled delay.

Data size limits

Zapier caps the data size that can pass between steps, which can be an issue with large scrape payloads. Workaround: Use hosted output URLs returned by Olostep to fetch large content separately rather than passing raw content between steps.

Polling triggers

Most Zapier triggers poll on a 5–15 minute interval, not instantly. Workaround: Use Zapier’s Webhooks trigger for instant notification, or schedule Zaps at fixed times rather than relying on near-real-time polling.

Troubleshooting

API key rejected

Copy the key directly from olostep.com/dashboard with no trailing spaces. Disconnect and reconnect the Olostep account in Zapier if the error persists.

Scraped content is empty

Increase Wait Before Scraping (try 2000–5000ms for JS-heavy pages). Confirm the URL is publicly accessible without a login. If a specific domain is consistently failing, contact info@olostep.com.

Batch URL format error

The URLs to Scrape field expects a JSON array. Use a Code by Zapier step upstream to build this array from your data if needed.
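As a rough sketch of such an upstream step, the Python below turns a newline- or comma-separated list of URLs into a JSON array string. It assumes the field accepts an array of plain URL strings; if the field expects objects instead, adjust the element shape according to the Batches API reference. The function name and the `raw_urls` / `urls_json` keys are hypothetical.

```python
import json
import re


def build_url_array(raw: str) -> str:
    """Convert whitespace- or comma-separated URLs into a JSON array string.

    Blank entries and exact duplicates are dropped; order is preserved.
    Assumes no URL contains a literal comma or whitespace.
    """
    seen = set()
    urls = []
    for part in re.split(r"[\s,]+", raw.strip()):
        if part and part not in seen:
            seen.add(part)
            urls.append(part)
    return json.dumps(urls)


# In a Code by Zapier (Python) step this might be wired up roughly as:
#   output = {"urls_json": build_url_array(input_data["raw_urls"])}
# where "raw_urls" is an input field you map from an earlier step.
```

You would then map the resulting `urls_json` value into the URLs to Scrape field of the Batch Scrape URLs action.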

Rate limit hit

Add a Delay step between scrape actions, or switch to Batch Scrape URLs instead of looping single scrapes. Check current usage in the dashboard.

Zap times out on crawl or batch

These operations are async by design. Store the returned ID immediately after the action, then use a second Zap or a scheduled poll to retrieve results later.

Related

Scrapes API

Full reference for the scrape endpoint

Batches API

How batch jobs work and how to retrieve results

Crawls API

Crawl configuration and result retrieval

Maps API

URL discovery and filtering options

Zapier Website

Zapier platform